Image annotation and video annotation play a vital role in many fields, from computer vision to machine learning. These annotations enable us to understand and interpret visual content. But what if we could add even more meaning to these annotations? That's where CVAT.ai Label Attributes come into play! In this article, we'll explore how CVAT.ai Label Attributes add an extra semantic layer, enriching the data with more specific annotations that provide additional context and insight.

What is CVAT.ai?

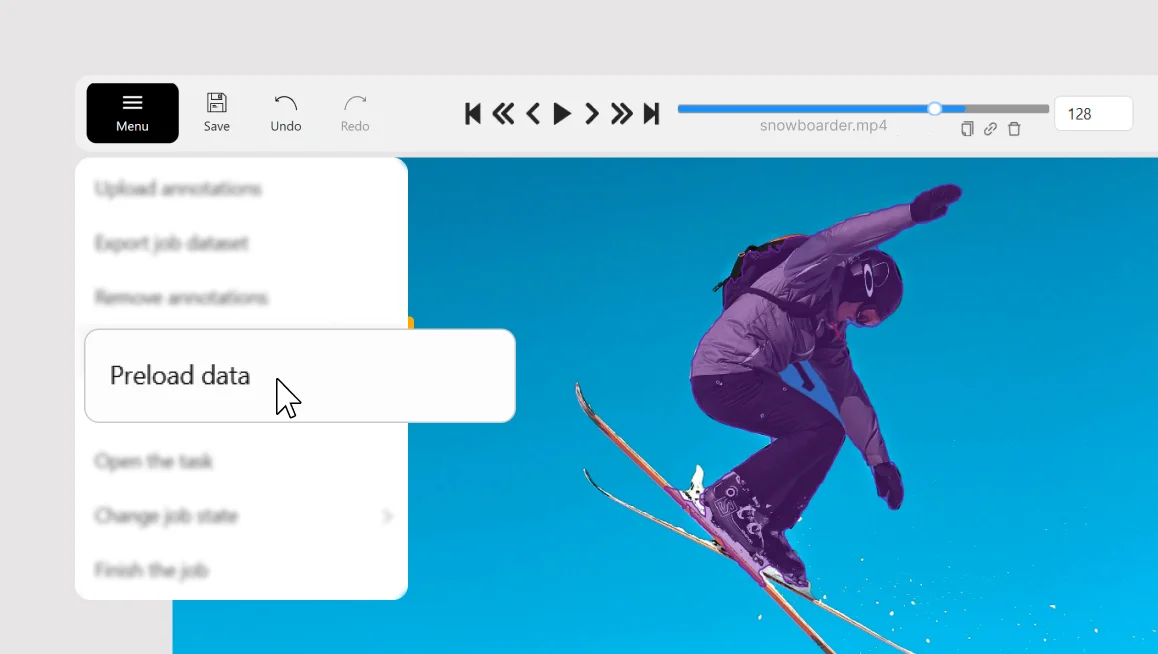

CVAT.ai is a powerful computer vision annotation tool used to mark objects and define their boundaries in images and videos. It simplifies the process of creating annotated datasets, making it easier for machines to understand visual data.

CVAT.ai Attributes

Imagine taking the already efficient CVAT annotation process to a whole new level with CVAT.ai Label Attributes. Attributes are descriptive tags that provide additional information about each annotated object. They act as tiny pieces of context, guiding both humans and machines toward better comprehension.

Using CVAT.ai Label Attributes is simple. For each label, you define the attributes that apply to it, and annotators then set their values on every object they annotate. For instance, if you are annotating traffic signs, the primary label "Traffic Sign" can carry a "Type" attribute with values such as "Stop" or "Yield", plus a "Damaged" flag that records the sign's condition.
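To make the traffic sign example concrete, here is a minimal Python sketch that builds a label definition and prints it as JSON. It assumes the field names used by CVAT's raw label editor (`name`, `attributes`, `mutable`, `input_type`, `default_value`, `values`) as we understand them; the helper functions and the label, attribute, and value names are hypothetical, so check the output against the label constructor in your CVAT version before pasting it in.

```python
import json


def make_select_attribute(name, values, mutable=False):
    """Attribute whose value is picked from a fixed list (a dropdown).

    Assumption: CVAT's raw label format uses input_type "select" and takes
    the first listed value as a sensible default.
    """
    return {
        "name": name,
        "mutable": mutable,
        "input_type": "select",
        "default_value": values[0],
        "values": list(values),
    }


def make_checkbox_attribute(name, mutable=False):
    """Boolean attribute; values are stored as strings ("false"/"true")."""
    return {
        "name": name,
        "mutable": mutable,
        "input_type": "checkbox",
        "default_value": "false",
        "values": ["false"],
    }


# Hypothetical label definition for the traffic sign example:
# one label with a sign "type" dropdown and a "damaged" checkbox.
labels = [
    {
        "name": "traffic_sign",
        "attributes": [
            make_select_attribute("type", ["stop", "yield", "speed_limit"]),
            make_checkbox_attribute("damaged", mutable=True),
        ],
    }
]

# Print the JSON that would be pasted into the raw label editor.
print(json.dumps(labels, indent=2))
```

Using a "select" attribute for the sign type keeps values consistent across annotators, while marking "damaged" as mutable lets its value change from frame to frame on a video track.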

Why CVAT.ai Attributes Matter

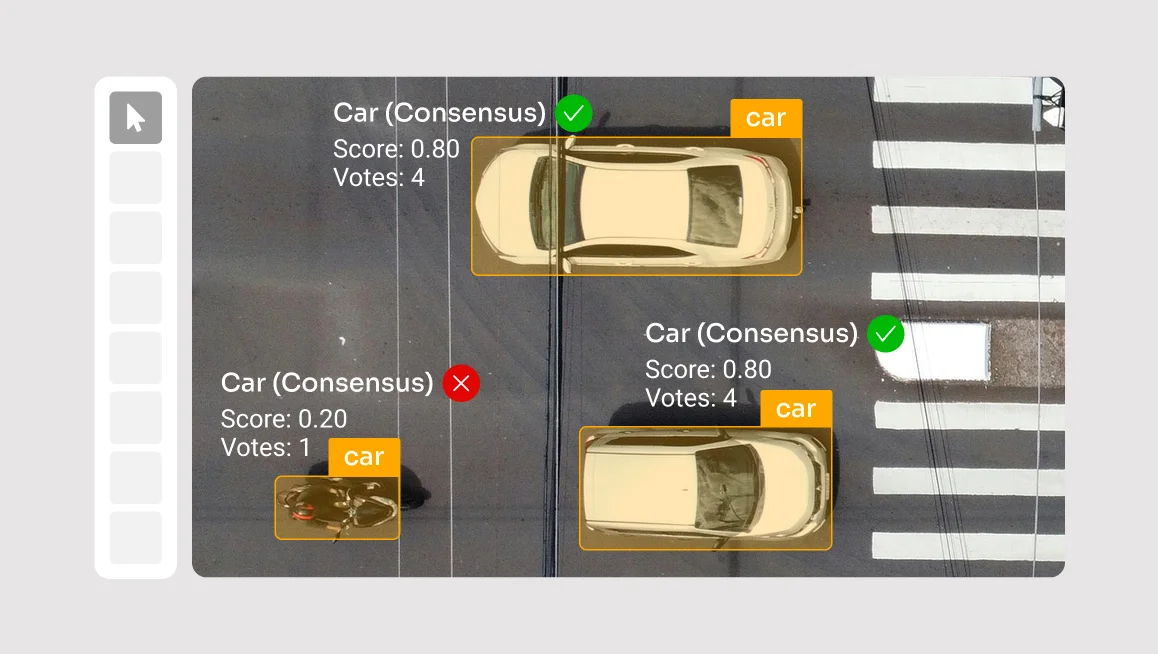

1. Enhanced Context: Imagine annotating a dataset with plain bounding boxes around cars, all sharing a single label. With CVAT.ai Label Attributes, you can add a body-type attribute with values such as "SUV", "Sedan", or "Convertible". This extra context lets ML models learn to distinguish car types instead of only detecting cars, which can lead to more accurate results.

2. Improved Analysis: When dealing with complex data, such as medical images, CVAT.ai Label Attributes enable annotators to include critical details like "Benign Tumor", "Malignant Tumor", or "Inflammation". This extra layer of information allows researchers and medical ML models to perform more detailed analysis and support better-informed decisions.

3. Enriched Machine Learning: ML models thrive on diverse data. With CVAT.ai Label Attributes, you can train a model on richer data that goes beyond a single class label per object. Exposure to these varied attributes helps the model become more adaptive and versatile.

And many more!

Want to know more about how to use them? Watch the video!

And share your feedback!

Happy annotating!

Don't want to miss updates and news? Have any questions? Join our community!
