CVAT already has impressive automatic annotation abilities with its built-in models. Today, we are announcing that we have extended them by adding third-party deep learning (DL) models from Hugging Face and Roboflow. Depending on the model, this integration has the potential to increase annotation speed by an order of magnitude. In this article, we show how these models can improve your annotation process.

Introduction to Hugging Face and Roboflow

Hugging Face and Roboflow are very popular online platforms providing ready-to-use services for artificial intelligence.

Hugging Face provides a large collection of pre-trained machine learning models for Natural Language Processing (NLP) and Computer Vision. It offers an API for the models and integrates with a range of deep learning frameworks. The platform lets developers and researchers quickly experiment with different models and use them for tasks such as sentiment analysis, text classification, language translation, and image classification, among others.

Roboflow is a comprehensive platform for managing and preprocessing datasets and models. It has a wide range of models to meet different needs and requirements, including object detection, image classification, image segmentation, and many more. Whether you're working on a simple task or a complex project, you can use one of more than 7,000 pre-trained models and focus on your project instead of training your own models from scratch.

CVAT leverages the strengths of both Hugging Face and Roboflow by integrating their models into its platform, resulting in a more efficient data annotation workflow.

CVAT integration with Hugging Face and Roboflow

With Roboflow and Hugging Face models integrated into CVAT, you can now run leading pre-trained models directly from the CVAT interface and annotate your data much faster. Adding these models to CVAT takes only a few steps, thanks to an interface designed for that purpose.

To add a model from Roboflow, a few requirements must be met. First, create a Roboflow account. Then get the model URL and the API key, both of which can be found in the Roboflow Universe.

Simply locate the desired model, but keep in mind that CVAT integration only supports image classification, object detection, and image segmentation models.

To test and experiment with the new feature, we suggest trying the following models:

- License Plate Recognition Detection: a pre-trained model for license plate recognition.

- Hard Hats Detection: designed to detect hard hats.

- Face Detection: a pre-trained model for face detection.

- Mask Wearing Detection: designed to detect faces in masks.

- Or use the search to find another model.

Click on the model’s name and scroll down to the Hosted API section. There you will find the model URL and the API key.

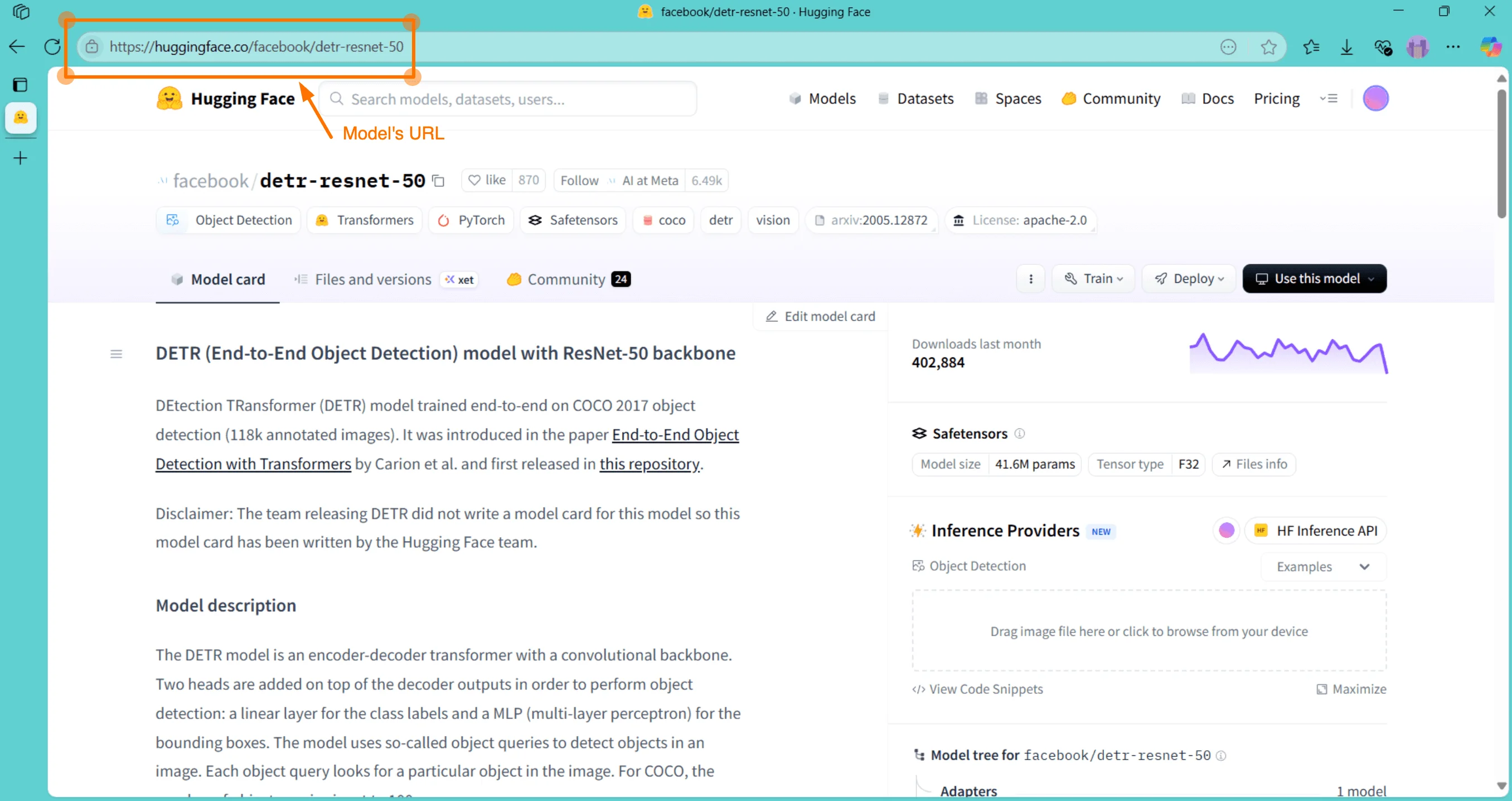
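For readers curious how a model URL and an API key fit together outside of CVAT, here is a minimal sketch that prepares, but does not send, a request to a hosted detection endpoint. The URL pattern, the `api_key` query parameter, and the base64-encoded body are assumptions based on the general shape of hosted inference APIs, and the model name and key are placeholders. Check the Hosted API section of your model for the exact format.

```python
import base64
from urllib.parse import urlencode
from urllib.request import Request


def build_roboflow_request(model_url: str, api_key: str, image_bytes: bytes) -> Request:
    """Prepare (but do not send) a POST request for a single image."""
    # Assumption: the key is passed as an `api_key` query parameter and the
    # image travels as a base64-encoded request body.
    query = urlencode({"api_key": api_key})
    return Request(
        url=f"{model_url.rstrip('/')}?{query}",
        data=base64.b64encode(image_bytes),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


# Hypothetical placeholder values, not a real model or key.
request = build_roboflow_request(
    "https://detect.roboflow.com/example-model/1",
    "YOUR_API_KEY",
    b"fake image bytes",
)
print(request.get_method(), request.full_url)
```

CVAT handles this step for you once the URL and key are saved, so the sketch is only meant to show what the two values are used for.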

To integrate Hugging Face models with CVAT, first create an account on the Hugging Face website. Once you have logged in, get your User Access Token from the settings page. Then choose a model from the list of available models. As with Roboflow, keep in mind that CVAT integration only supports image classification, object detection, and image segmentation models. Click on the model’s name to open its page and get the model URL.

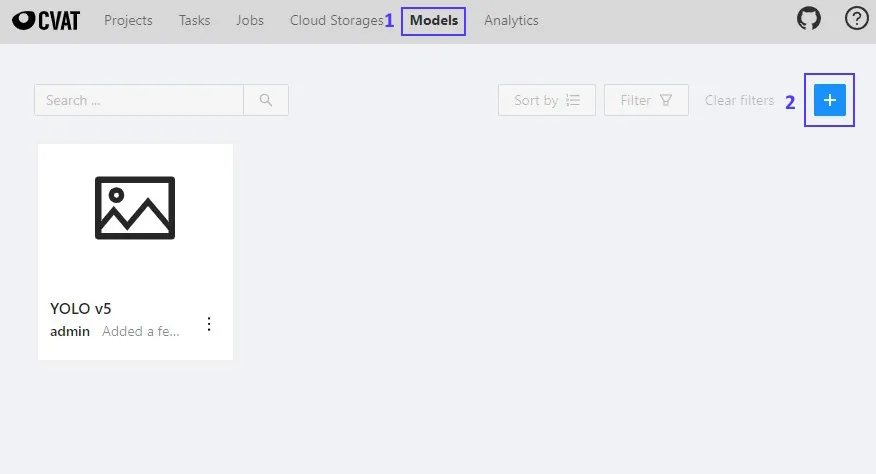
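The sketch below illustrates how a model URL and a User Access Token could be combined into an authenticated request. It only builds the request and never sends it. The inference base URL is an assumption that may not match the current Hugging Face endpoints, the token is a placeholder, and the helper names are hypothetical.

```python
from urllib.parse import urlparse
from urllib.request import Request

# Assumption: base URL of a hosted inference endpoint; verify it in the
# Hugging Face documentation before relying on it.
INFERENCE_BASE = "https://api-inference.huggingface.co/models"


def model_id_from_url(model_url: str) -> str:
    """Return the 'owner/name' part of a model page URL."""
    path = urlparse(model_url).path.strip("/")
    if not path:
        raise ValueError(f"no model ID found in URL: {model_url!r}")
    return path


def build_hf_request(model_url: str, token: str, image_bytes: bytes) -> Request:
    """Prepare (but do not send) an inference request for one image."""
    return Request(
        url=f"{INFERENCE_BASE}/{model_id_from_url(model_url)}",
        data=image_bytes,
        # The User Access Token is sent as a bearer token.
        headers={"Authorization": f"Bearer {token}"},
        method="POST",
    )


# Placeholder token; the model URL points to a public object detection model.
request = build_hf_request(
    "https://huggingface.co/facebook/detr-resnet-50", "hf_xxx", b"fake image bytes"
)
print(request.full_url)
```

Inside CVAT none of this is needed: you paste the model URL and the token, and CVAT makes the calls on your behalf.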

To add a model to CVAT, first log in and navigate to the Models section. From there, click the Add New Model (+) button and proceed to input the model URL:

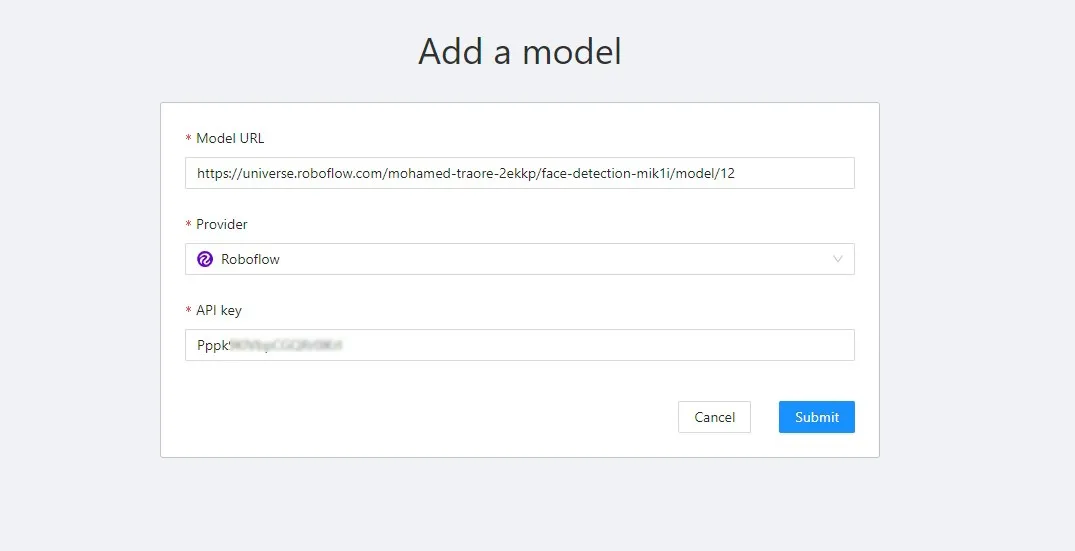

Once the model URL has been added, CVAT will automatically detect the provider. The final step is to enter the API key (or User Access Token if you are using Hugging Face) and click Submit:

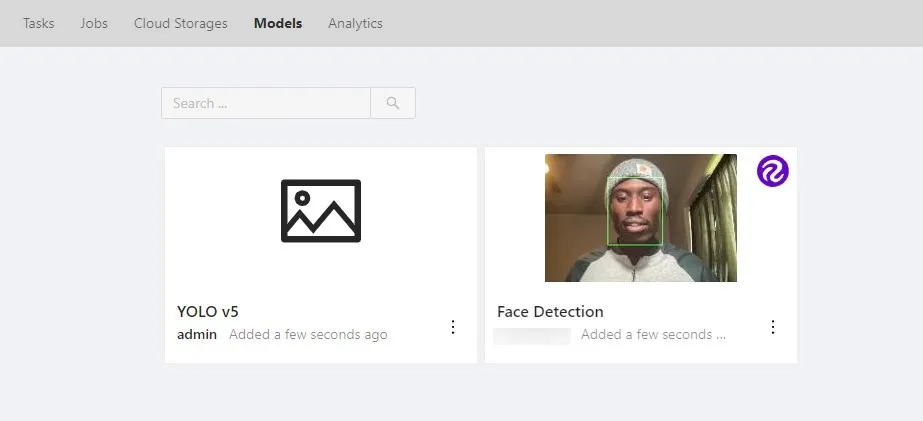
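To give an intuition for how a provider can be recognized from a URL alone, here is a small sketch that matches the URL's hostname against known domains. This is an illustration only, not CVAT's actual implementation; the mapping and the function name are hypothetical.

```python
from typing import Optional
from urllib.parse import urlparse

# Hypothetical mapping from a provider's domain to a provider name.
PROVIDER_DOMAINS = {
    "roboflow.com": "roboflow",
    "huggingface.co": "huggingface",
}


def detect_provider(model_url: str) -> Optional[str]:
    """Return the provider name for a model URL, or None if unrecognized."""
    host = (urlparse(model_url).hostname or "").lower()
    for domain, provider in PROVIDER_DOMAINS.items():
        # Accept the bare domain and any of its subdomains, but not
        # look-alike hosts such as "notroboflow.com".
        if host == domain or host.endswith("." + domain):
            return provider
    return None


print(detect_provider("https://huggingface.co/facebook/detr-resnet-50"))
print(detect_provider("https://universe.roboflow.com/some-workspace/some-model"))
print(detect_provider("https://example.com/model"))
```

Matching on the parsed hostname rather than on a substring of the whole URL avoids false positives when a provider's name appears elsewhere in the path or query string.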

The model will appear on the Models page. Click on it to see the predefined labels:

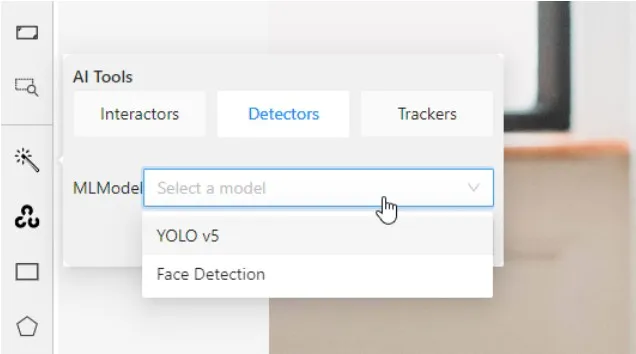

Now all that is left is to create a task with the model’s predefined labels. The model will then be accessible from the CVAT tools for both manual annotation of individual images and automatic annotation of multiple images or videos.
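Because the task labels need to match the model's predefined labels, it can help to generate the label list rather than type it by hand. The sketch below builds a list of label entries from label names and prints it as JSON. The entry layout (`name` plus an empty `attributes` list) is an assumption modeled on CVAT's raw label editor, and the label names are examples, so compare the output with what your CVAT version expects.

```python
import json
from typing import Dict, Iterable, List


def build_label_spec(label_names: Iterable[str]) -> List[Dict[str, object]]:
    """Turn label names into label entries, skipping blanks and duplicates."""
    seen = set()
    spec = []
    for name in label_names:
        clean = name.strip()
        if clean and clean not in seen:
            seen.add(clean)
            # Assumption: a label entry needs a name and a list of attributes.
            spec.append({"name": clean, "attributes": []})
    return spec


# Example label names; use the ones shown on your model's page in CVAT.
labels = build_label_spec(["face", "mask ", "face"])
print(json.dumps(labels, indent=2))
```

Keeping the names identical to the model's labels, including case, is what allows predictions to be mapped onto the task's labels.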

And you can start annotating.

To wrap it up: the combination of Hugging Face, Roboflow, and CVAT is a game-changer for computer vision projects, offering the convenience and versatility of pre-trained models from both platforms. Integrating these tools makes the annotation process faster and more intuitive.
