Building a high-performing machine learning model is often compared to constructing a house, where the final structure is only as strong as the foundation it sits on. In the context of AI, that foundation is your dataset. Every piece of data must be carefully annotated, so the model understands the underlying patterns, classes, and spatial or semantic relationships within it.

Once your data is labeled, the next question is the file format in which those annotations are stored. Even a meticulously labeled dataset is of little use if your model’s training pipeline cannot parse the format it arrives in. That is why choosing the right format is a critical factor impacting training efficiency, model compatibility, and future scalability.

While there are many annotation formats available, selecting the optimal one requires understanding your model architecture, deployment environment, and the specific geometries and metadata your project demands.

In this guide, we will explore how dataset format requirements vary by data type, break down the most common annotation formats, discuss niche formats worth knowing, and explain why your choice of format matters far more than most teams realize.

Dataset Formats by Data Type

Before choosing a specific file format, teams must identify the precise structure of their raw data. Annotation requirements vary significantly by data type, as each medium demands unique geometric, temporal, or semantic structures.

Image-Based Datasets

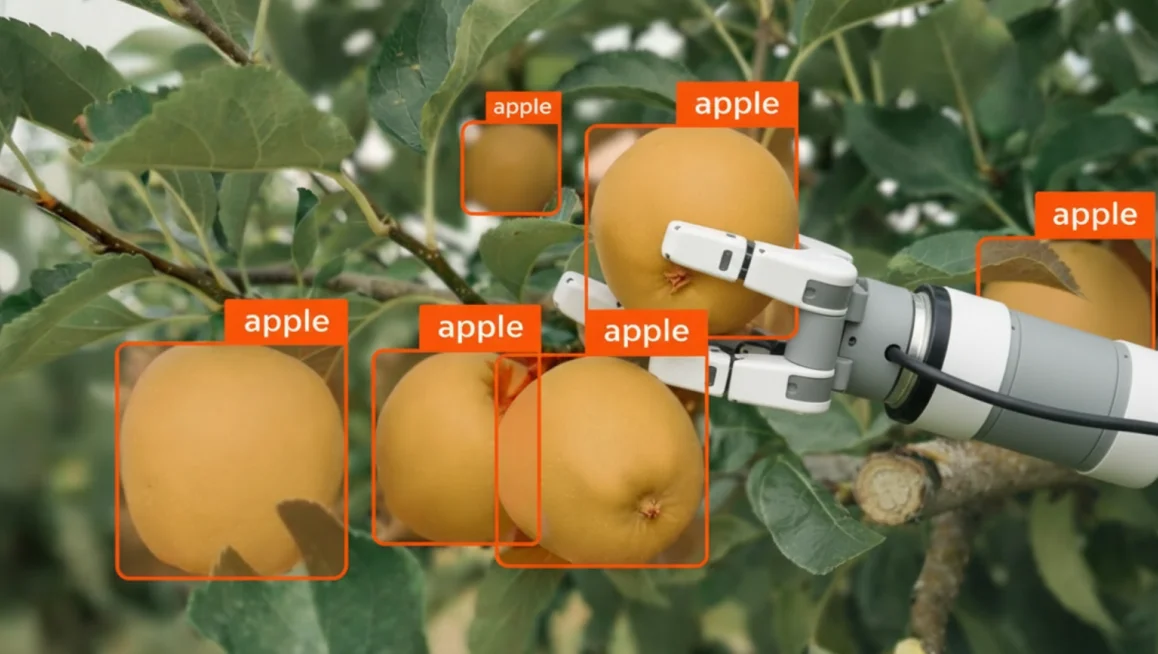

For tasks like object detection, segmentation, and classification, teams rely on various methods of image annotation to label static visual data. Each of these specific computer vision tasks requires a distinct file format capable of encoding the necessary coordinate or categorical metadata.

- Standard Formats: COCO JSON, YOLO TXT, Pascal VOC XML, and ImageNet.

- Key Characteristic: These formats efficiently map 2D spatial coordinates (bounding boxes, polygons) and categorical labels to static pixels. They are universally supported by modern computer vision frameworks.

Because different computer vision architectures process annotations uniquely, the choice of an image-based dataset format depends directly on the specific machine learning task:

Video-Based Datasets

To train models on motion and continuity, specialized workflows for video annotation are required to handle sequential frame data. Because these systems track objects across time, the chosen video-based dataset format must be able to preserve complex temporal relationships and frame-to-frame continuous indexing.

- Standard Formats: MOT (Multiple Object Tracking) CSV, CVAT Video XML, and tracking-focused COCO JSON.

- Key Characteristic: Video annotation requires formats that preserve temporal continuity across frames. Without strict frame-referencing and object IDs, a model cannot maintain identity tracking or learn movement vectors over time.

Tracking objects and maintaining structural continuity over time means that video-based dataset formats must adapt to specific motion-based tasks:

Point Cloud-Based Datasets

Applications in robotics and autonomous driving depend on point cloud annotation to process complex three-dimensional environments. Managing these datasets demands specialized spatial formats that can encode depth data and sensor fusion matrices without slowing down data ingestion pipelines.

- Standard Formats: KITTI BIN/TXT, PCD (Point Cloud Data), and nuScenes JSON.

- Key Characteristic: Built for autonomous systems, these file formats encode depth, 3D spatial geometries (cuboids), sensor fusion metadata, and semantic segmentation across millions of X, Y, Z coordinate points.

Audio Datasets

For systems dealing with speech processing or sound event detection, standard audio annotation processes are used to isolate specific signals. These pipelines rely on audio-based dataset formats that prioritize precise time-interval alignments and sample-rate metadata over visual or structural coordinates.

- Standard Formats: Audacity Label TXT, Praat TextGrid, and custom WAV/JSON metadata pairs.

- Key Characteristic: Audio annotation maps timestamps to acoustic events or phonetic labels. Unlike visual formats, audio schemas must prioritize time-interval alignments and sample-rate metadata rather than spatial coordinates.

Whether analyzing linguistic phonemes or raw ambient acoustics, the chosen audio-based dataset format is determined by the downstream machine learning application:

The Big Three Annotation Formats

While dozens of specialized annotation structures exist, three open-source dataset file formats have become the dominant standards for training computer vision models. Selecting among these formats requires balancing architectural compatibility, metadata complexity, and pipeline parsing speeds.

COCO (Common Objects in Context)

COCO is a highly structured, JSON-based format optimized for deep, centralized dataset management. Originally developed by Microsoft, it acts as the primary standard for complex multi-task vision pipelines and is supported by many annotation tools and model training frameworks.

Data structure: A single, global JSON file stores all image metadata, class categories, and coordinate arrays for the entire dataset split.

Strengths:

- Natively maps intricate, overlapping geometries including bounding boxes, segmentation polygons, and skeletal keypoints within one file.

- Centralizes complex metadata schemas, hierarchical class relationships, and image-level attributes.

- Facilitates multi-task learning, allowing classification, detection, and keypoint models to ingest the same file.

Limitations:

- Global JSON structures scale poorly in memory, making file parsing highly resource-intensive for multi-million image datasets.

- Verbose nesting makes manual debugging, manual text editing, or file version control difficult.

- Modifying or removing a single image sample requires rewriting the entire monolithic JSON schema.

COCO is the ideal choice when a project requires complex geometries, rich metadata, or multi-task learning on static images. If a pipeline requires a model to simultaneously perform object detection, instance segmentation, and keypoint estimation on a single dataset, COCO’s unified JSON schema provides the necessary underlying structure.

YOLO (You Only Look Once)

YOLO dataset formats prioritize structural simplicity and minimal storage overhead. Engineered alongside the YOLO real-time object detection ecosystem, this format minimizes training pipeline preprocessing bottlenecks.

Data structure: A distributed file system featuring one plain text (.txt) file per image. Each row inside the file represents a single object instance following a strict space-separated format: [class_id normalized_x_center normalized_y_center normalized_width normalized_height].

Strengths:

- Ultra-lightweight payload footprint ensures maximum parsing speed and minimal RAM overhead during training loops.

- Distributed per-image structure simplifies data streaming, parallel processing, and individual sample deletion.

- Modern implementations (e.g., Ultralytics) expand the format to support instance segmentation by swapping four bounding box metrics for trailing coordinate sequences (x1 y1 x2 y2...).

Limitations:

- Standard YOLO syntax strictly forbids custom metadata, individual confidence tags, or image-level global attributes.

- Coordinate normalization relative to image boundaries can introduce minute mathematical rounding errors during cross-format conversion.

- Lacks native hierarchical clustering, making complex parent-child class structures impossible to declare inside the text line.

YOLO is the ideal choice when training pipeline speed is the priority and the primary machine learning task is bounding box detection. If a team is deploying models to resource-constrained edge hardware or requires rapid data iteration cycles, the minimal overhead of the YOLO text structure offers a distinct advantage.

Pascal VOC

Pascal VOC is an XML-based metadata standard originating from early computer vision benchmarking challenges. Though less frequent in state-of-the-art pipelines, it remains deeply embedded in legacy systems and custom industrial inspection software.

Data structure: A distributed file system assigning one XML document (.xml) to every corresponding image sample.

Strengths:

- XML tag hierarchies provide excellent human readability, allowing immediate inspection using basic text editors.

- Explicitly documents complete image metadata inside each individual file, including pixel height, width, depth channels, and folder paths.

- Easily parsed by simple custom script parsers without importing heavy external serialization libraries.

Limitations:

- Highly verbose markup text leads to unnecessarily inflated file storage sizes at production scale.

- Standard VOC XML schemas cannot store complex segmentation polygons; pixel-wise segmentation requires managing a separate, color-coded PNG mask matrix.

- Total absence of tracking ID tags makes the format entirely incompatible with temporal video workflows.

Pascal VOC remains a practical choice for smaller datasets, rapid prototyping, or workflows that require direct human inspection of the metadata. When a team needs to manually verify, audit, or patch individual annotations without relying on specialized parsing tools, the native readability of the VOC XML file structure serves as a useful asset.

Format Comparison at a Glance

The table below summarizes the key differences between the three most common formats to help you make a quick, informed decision.

Specialized Dataset Formats Examples Worth Knowing

Beyond the dominant standards, several niche dataset formats are tailored for specific industry domains or specialized data types. Selecting these formats is often driven by distinct project constraints, such as autonomous driving sensory data or complete format interoperability.

Cityscapes

The Cityscapes file format specializes in urban scene understanding with dense, pixel-level labels. Rather than using coordinate boxes, it uses a multi-directory system that pairs raw images with color-indexed PNG pixel masks and corresponding JSON files to categorize environmental elements like "road," "pedestrian," or "vehicle."

- Key Advantage: Built uniquely for continuous, edge-to-edge semantic and instance segmentation.

- Key Limitation: High storage overhead and complex directory layouts make it entirely unsuited for general 2D bounding box tasks.

This format is the ideal choice for autonomous vehicle perception, urban robotics, and semantic scene-parsing models that require dense, pixel-by-pixel class identification.

KITTI

The KITTI format focuses on autonomous driving benchmarks by bridging the gap between 2D camera sensors and 3D spatial environments. It utilizes space-separated text files containing 15 values per row to encode 3D bounding boxes (cuboids), rotational orientation, and sensor calibration matrices.

- Key Advantage: Natively integrates depth, spatial orientation, and camera-to-LiDAR sensor fusion data.

- Key Limitation: Highly rigid data schema that cannot easily accommodate custom metadata attributes or 2D segmentation polygons.

KITTI is the ideal choice for autonomous vehicle systems combining camera imagery with LiDAR point clouds, 3D object detection, and multi-object tracking.

Datumaro

Datumaro is an open-source dataset management framework developed alongside CVAT. It acts as a comprehensive, unified JSON-based directory system engineered to preserve every available annotation geometry—from bounding boxes and skeletons to ellipses and dense masks—without data loss during pipeline ingestion.

- Key Advantage: Total preservation of data provenance, annotation geometries, and complex metadata layers.

- Key Limitation: Major framework training loops do not support it natively, requiring an intermediate translation step before model training.

Datumaro is the ideal choice for establishing high-fidelity ground truth benchmarks and acting as an intermediate, lossless storage format before converting annotations into task-specific architectures like YOLO or COCO.

Why Your Annotation File Format Matters More Than You Think

The chosen data schema influences the performance and stability of the entire machine learning pipeline, far beyond a simple final export step. A structural mismatch between annotation storage and the training pipeline can lead to severe operational bottlenecks, hours of debugging, or catastrophic downstream model failures.

The choice of an annotation format has a direct, measurable impact on three core areas of the machine learning lifecycle:

1. Model Compatibility and Training Integrity

Model architectures are fundamentally bound to specific mathematical coordinate structures, though modern training frameworks often support multiple input formats. For instance, advanced frameworks like Detectron2 can ingest various native structures or compile them internally, while others, like the Ultralytics framework, strictly default to normalized YOLO TXT coordinates. However, underlying vision ecosystems (such as standard PyTorch or TensorFlow templates) still heavily depend on the absolute pixel coordinates standardized by COCO JSON.

Failing to align these expectations forces teams to rely on custom coordinate-conversion scripts. Minor bugs in these scripts frequently introduce silent tracking errors—such as bounding box shifts, inverted coordinate origins, or class index rotation—that degrade model accuracy without throwing fatal code errors.

2. Tooling Interoperability and Pipeline Maintenance

The choice of format dictates which data loaders, augmentation frameworks (like Albumentations), and model visualization tools can be used natively out of the box. Opting for a universally supported format eliminates the need to write and maintain complex, custom preprocessing code.

Furthermore, if a pipeline leverages automated data labeling, model-assisted annotation, or programmatic quality assurance checks, selecting an industry-standard format ensures the dataset remains fully compatible with third-party automation tools without requiring constant data restructuring.

3. Scalability, Infrastructure, and Version Control

The underlying file architecture (monolithic JSON vs. distributed TXT or XML) dramatically alters data engineering pipelines as a project scales from thousands to millions of samples:

- Distributed Formats (YOLO TXT, Pascal VOC XML): Storing one file per image simplifies object-level or image-level version control via Git. It also allows for efficient data streaming and parallel data loading during training loops.

- Monolithic Formats (COCO JSON): Centralizing data into a single file makes small datasets easy to distribute. However, at production scale, multi-gigabyte JSON files become massive bottlenecks, as they consume excessive RAM and slow down pipeline initialization during parsing.

Common Mistakes When Choosing a Format

Selecting an incompatible dataset structure is a frequent and costly mistake in machine learning engineering. Failing to audit data schemas early in the project lifecycle can introduce severe architectural bottlenecks that often require complete dataset re-processing.

When establishing data labeling guidelines, engineering teams must guard against four prevalent structural errors:

1. Prioritizing popularity over task alignment

Selecting a dataset format based solely on its industry adoption rather than its geometric compatibility creates immediate pipeline inefficiencies. For example, while COCO JSON is a dominant industry standard, deploying it for a straightforward 2D bounding box detection task introduces unnecessary parsing overhead, inflated storage requirements, and memory bloat. Conversely, attempting to force complex multi-task labels into lightweight structures restricts a model's capacity to learn.

2. Ignoring your annotation tool's export capabilities

Failing to validate the precise data translation paths between an annotation platform and a training framework frequently causes silent data loss. Many native tool exporters strip out critical custom metadata attributes, confidence metrics, or annotator tags during serialization. If these pipelines are not rigorously tested with a small sample split before full-scale labeling begins, critical data provenance layers can be permanently discarded.

3. Misjudging structural geometry constraints

A frequent architectural error is assuming that all annotation formats natively support all geometric shapes. Document structures are mathematically rigid; for example, attempting to export complex multi-point segmentation polygons into a legacy YOLO text file will automatically strip away the boundary geometry. Because the legacy line syntax strictly accepts only four normalized bounding box coordinates, the pipeline will compress the polygon into a standard box, destroying fine-grained semantic features.

4. Disregarding production-scale storage architecture

Choosing a format based on prototyping convenience rather than long-term infrastructure capacity causes severe bottlenecks as datasets expand. Highly verbose markup systems like Pascal VOC XML function well for datasets containing thousands of images. However, when scaled to millions of operational production samples, managing millions of individual XML files creates catastrophic disk I/O bottlenecks, inflates cloud storage costs, and severely delays training loop initialization.

Can You Switch Dataset Formats Mid-Project?

Altering a dataset's format structure after data collection has begun is a common operational necessity, typically triggered by shifts in model architecture or changes in tooling infrastructure. While format migration is entirely possible using open-source scripts found across the internet or dedicated programmatic conversion frameworks, the process is rarely mathematically seamless or without risk.

The primary technical hazard during mid-project migration is irreversible data loss. Down-converting a comprehensive schema like COCO JSON—which maps complex polygonal boundaries and spatial keypoints—into a legacy bounding-box format like classic YOLO text files permanently strips away the high-fidelity geometry.

Furthermore, translating data between fundamentally different spatial coordinate systems introduces severe mathematical vulnerabilities. For example, converting absolute pixel-based integer boundaries (Pascal VOC XML) into normalized floating-point coordinate structures can introduce minute rounding errors and boundary precision drift. These compounding mathematical discrepancies can destabilize loss functions during training, a phenomenon deeply studied since early advancements in real-time object detection outlined in the foundational YOLO research paper.

To mitigate the risks of pipeline fragmentation, mature machine learning workflows implement a decoupled storage strategy. Rather than configuring the core annotation layer to match a specific, immediate model requirement, mature teams export their data into an annotation tool's native, "universal" format—such as CVAT’s Datumaro standard—or a comprehensive master format like COCO JSON.

By centralizing the ground truth within a rich schema that fully captures all metadata and complex geometries, teams create a future-proof data repository. Specialized, lightweight target formats (such as YOLO text variants) can then be generated programmatically as ephemeral outputs during the training pipeline's preprocessing phase, completely eliminating the risk of destructive data degradation.

The Proprietary Schema Alternative

When open-source dataset formats fail to meet project constraints, engineering teams frequently develop proprietary, custom JSON or XML schemas. Because annotations are fundamentally serialized text data, teams are rarely permanently locked into an existing standard if they possess the engineering resources to write custom parsing engines.

However, designing and maintaining a bespoke internal format introduces long-term code overhead, requires custom integration hooks for automated data loaders, and breaks out-of-the-box compatibility with standard visualization and augmentation libraries.

How CVAT Simplifies Dataset Export

Navigating the granular complexities of machine learning schemas is significantly simplified by deploying enterprise-grade tooling. The Computer Vision Annotation Tool (CVAT) natively abstracts these formatting friction points by supporting over 20 distinct dataset export structures. It covers everything from the core frameworks (COCO, YOLO, Pascal VOC) to highly domain-specific formats like Datumaro, MOT, CamVid, Cityscapes, KITTI, and the complete Ultralytics YOLO ecosystem.

The platform’s data compilation engine provides critical infrastructural flexibility across three distinct functional dimensions:

Granular Structural Control

Data pipelines can be exported at multiple administrative tiers depending on project scale. Teams can extract data globally at the Project level to merge multi-task annotations, isolate structural datasets at the Task level via Actions > Export task dataset, or pull highly isolated samples at the Job level via Menu > Export job dataset. This tiering isolates sub-tasks effectively when distinct annotator groups manage localized data segments.

Lossless Metadata Preservation

By incorporating unified framework schemas like Datumaro, the platform allows teams to archive intricate visual attributes, polyline tracking vectors, skeleton keypoints, and nested data provenance layers without risk of schema truncation. This preserves a single source of truth before data is compiled down to lightweight, model-specific production formats.

Synchronized Media Archiving

For teams requiring consolidated dataset distribution, the interface features a Save images toggle during the serialization process. Utilizing this option wraps the raw image matrices and the corresponding formatted text or JSON annotation coordinates into a single, perfectly synchronized cloud archive, eliminating the pipeline risk of decoupled media references.

Selecting an annotation format is not a trivial post-processing step; it is a foundational architectural decision that dictates training efficiency, infrastructure scaling costs, and overall pipeline stability. Aligning a dataset's underlying format with the mathematical expectations of the target model architecture ensures long-term framework compatibility and prevents costly manual debugging workflows.

Ready to get started?

CVAT Online lets you get started immediately in the browser, with no installation required. It provides quick access to these export features, so you can begin evaluating your workflows right away.

CVAT Enterprise is built for teams requiring self-hosted labeling setup and annotating at scale. It adds dedicated support, enterprise security options including SSO and LDAP, and collaboration and reporting features that help large production teams maintain quality and throughput across complex annotation projects.

Alternatively, if your team needs production-ready training data without building an annotation operation in-house, choose CVAT Labeling Services. With 300+ expert annotators across 12 time zones, CVAT's managed labeling team handles everything from project scoping and annotation to quality assurance and delivery.

.svg)

.png)

.png)

.png)